Like most technology companies, Octopus collects metrics on how our business and product are doing. We’ve done extraordinarily well over the past few years, so I thought it would be great to give you a glimpse into some of these metrics.

I have split this post into 3 sections:

- Traction

- Conversion

- Typical and largest installations

We collect most of this data from two primary sources:

- Our licenses and orders database - if you start a trial or buy a license, it’s recorded here

- Telemetry (usage statistics) - users can turn this off during setup. We guess that at least half of the installs in the wild have it turned off, but everything we present below is based on what we’ve actually recorded (i.e., if we say there are 6500 active installs, that means the telemetry is telling us 6500; the real number could be twice as high).

Where a SaaS company can easily see when someone last logged in to their account, or measure its churn rate, we’re at a disadvantage. Octopus is downloadable software, and since we value our users’ privacy, our telemetry data is the closest thing we have to an approximation of usage.
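The "twice as high" estimate is simple scaling. Here is a minimal sketch, assuming installs that opt out of telemetry are otherwise similar to the ones that report; the function name `estimate_true_installs` is hypothetical and not part of any Octopus tooling:

```python
def estimate_true_installs(observed: int, opt_out_fraction: float) -> float:
    """Scale an observed telemetry count up to an estimated true count.

    Assumes opted-out installs behave like reporting ones, so the observed
    installs are a (1 - opt_out_fraction) share of the total.
    """
    if not 0 <= opt_out_fraction < 1:
        raise ValueError("opt_out_fraction must be in [0, 1)")
    return observed / (1 - opt_out_fraction)


# With 6,500 reporting installs and half of all installs opted out,
# the estimate doubles.
print(estimate_true_installs(6500, 0.5))  # 13000.0
```

If more than half opt out, the multiplier grows beyond 2, which is why the recorded numbers are best read as a lower bound.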

Traction

There are over 6,500 “active” installations of Octopus in the wild right now. This comes from our telemetry data: each time an Octopus server checks for updates, we record the ping. So there are 6,500 instances of Octopus that have checked for updates sometime in the last couple of days (again, many users may have turned this off). Since Octopus is typically installed on a virtual machine or server, these instances are rarely “forgotten” and left running in the background. The number of active installations has doubled over the past 12 months.

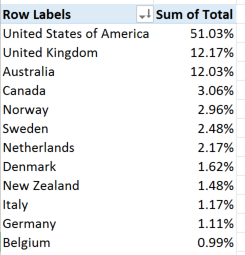
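Counting "active" installations this way boils down to counting distinct instances with at least one update-check ping inside a recent window. A minimal sketch, assuming a hypothetical ping log of `(instance_id, timestamp)` pairs and a two-day window standing in for "the last couple of days"; the real Octopus data model is not described in this post:

```python
from datetime import datetime, timedelta

# Hypothetical ping log entry: (instance_id, time the update check was received).
Ping = tuple[str, datetime]


def count_active(pings: list[Ping], now: datetime, window: timedelta) -> int:
    """Count distinct instances with at least one ping inside the window."""
    cutoff = now - window
    # A set de-duplicates instances that checked for updates more than once.
    return len({instance for instance, seen in pings if seen >= cutoff})


now = datetime(2016, 5, 10)
pings = [
    ("a", now - timedelta(hours=5)),
    ("a", now - timedelta(hours=30)),  # same instance, counted once
    ("b", now - timedelta(days=1)),
    ("c", now - timedelta(days=10)),   # outside the window
]
print(count_active(pings, now, timedelta(days=2)))  # 2
```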

25% of the Fortune 100 companies have bought an Octopus license. We’ve sold Octopus licenses in 62 countries. Our top countries are:

(Per head of population, the Scandinavian countries really love Octopus!)

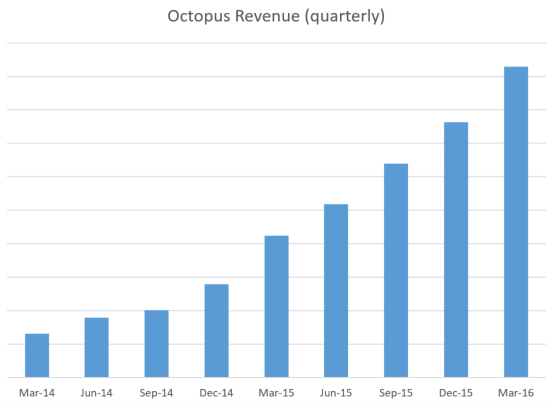

I won’t share our revenue numbers, but the growth looks like this:

Conversion

There were 1008 new trials of Octopus in April this year, and March and February were similar. Given that Octopus started as a project in my spare time five years ago, it’s unbelievable to think that every month, over 1000 new people begin an Octopus trial. The number of new trials is growing, too: the previous quarter had 10% more trials than the quarter before.

Before the next part, I should explain that we offer a 45-day free trial. When it ends, you can either buy a paid license or switch to the free community version (which deploys to up to 10 servers).

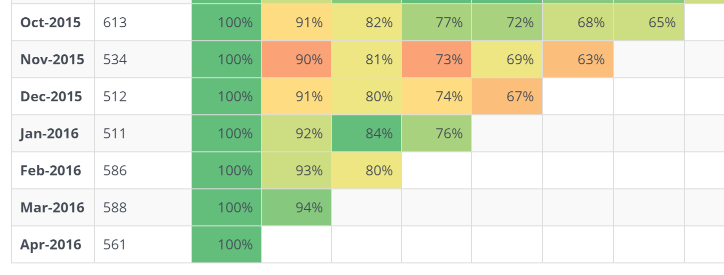

How good are we at getting people to stick with Octopus? Here’s a cohort analysis over our telemetry data:

In January 2016, 511 new instances sent us telemetry data for the first time. Four months later, by April 2016, 76% of those instances were still sending us telemetry data. Since the 45-day trial would have expired by then, those that didn’t purchase a license must be using the free version.

(I said that in April 2016, 1008 new trials of Octopus were started. That comes from our licenses database - we know we issued exactly 1008 new trial licenses. The cohort analysis comes from telemetry data, which users are told about and can turn off during the setup wizard, so we only saw telemetry data from 561 new instances.)
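A cohort analysis like this groups instances by the month they first reported, then asks what share of that group is still reporting in a later month. A minimal sketch, assuming a hypothetical summary that maps each instance ID to the set of `YYYY-MM` months it reported in (zero-padded strings sort chronologically, so `min` gives the first month seen); `cohort_retention` is an illustrative name, not an Octopus API:

```python
# Hypothetical telemetry summary: instance_id -> months (YYYY-MM) it reported in.
Activity = dict[str, set[str]]


def cohort_retention(activity: Activity, cohort: str, later: str) -> float:
    """Share of instances first seen in `cohort` that still report in `later`."""
    members = [i for i, months in activity.items() if min(months) == cohort]
    if not members:
        return 0.0
    retained = sum(1 for i in members if later in activity[i])
    return retained / len(members)


activity = {
    "a": {"2016-01", "2016-02", "2016-04"},
    "b": {"2016-01"},
    "c": {"2016-01", "2016-04"},
    "d": {"2016-01", "2016-03", "2016-04"},
    "e": {"2016-02", "2016-04"},  # belongs to the February cohort, ignored
}
print(cohort_retention(activity, "2016-01", "2016-04"))  # 0.75
```

Applied to the January 2016 cohort, 76% of 511 instances is roughly 388 instances still reporting in April.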

In March, we sold 261 new Octopus licenses. If you compare the number of new licenses we sell each month with the number of new trials we issue, the ratio hovers between 23% and 27% each month.
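As a worked example of that ratio, here is a minimal sketch, assuming March’s trial volume was close to April’s 1008 (the post says the months were similar); `trial_conversion` is a hypothetical helper:

```python
def trial_conversion(new_licenses: int, new_trials: int) -> float:
    """Ratio of new paid licenses to new trials issued in the same month."""
    if new_trials <= 0:
        raise ValueError("new_trials must be positive")
    return new_licenses / new_trials


ratio = trial_conversion(261, 1008)
print(f"{ratio:.1%}")  # 25.9%
```

Note that this is a same-month ratio rather than following each trial through to a purchase, so it approximates the conversion rate instead of measuring it per customer; with trial volume roughly flat from month to month, the two should be close.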

Putting that together, our funnel looks something like:

- Roughly 1000 people start an Octopus trial in a given month

- Around 75% of them will still be using it four months later, well after the trial has expired (mostly on the community edition)

- 23-27% will eventually buy a license

That’s an extremely good conversion rate as far as I’m concerned.
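To make the funnel concrete, here is a minimal sketch that turns the rounded rates above into head counts for a typical month. The function name `funnel` is hypothetical, and the stages overlap rather than nest strictly: buyers are counted among the people still using Octopus.

```python
def funnel(trials: int, retained_share: float,
           paid_low: float, paid_high: float) -> dict[str, object]:
    """Convert funnel rates into approximate head counts for one month of trials."""
    return {
        "trials": trials,
        "still_using": round(trials * retained_share),
        "buy_license": (round(trials * paid_low), round(trials * paid_high)),
    }


print(funnel(1000, 0.75, 0.23, 0.27))
# {'trials': 1000, 'still_using': 750, 'buy_license': (230, 270)}
```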

Our licenses are perpetual, and you can renew the license each year to continue getting new features and support. Our renewal rate currently sits at around 63%. From what I’ve seen, for downloadable software, that’s not terrible, but it’s not amazing either. I think there are a few reasons (excuses?) for this:

- Octopus is the kind of software that you forget about once it’s running. People invest a lot of time setting up their build/deploy pipeline, then once it’s running, they focus on their real project and just let it run.

- The projects that Octopus was brought in to automate deployments for might have wound up, or gone into maintenance mode (Octopus is usually used by individual project teams, not some grand Enterprise plan)

- Where a SaaS company would store your payment details and automatically bill you, we have to email you to ask you to renew - it’s a lot more effort for you to renew.

Typical & largest installations

There are two parts to the telemetry data we collect. By enabling “check for updates”, we at least know that there’s an Octopus server currently active somewhere in the world. But by enabling “include feature usage statistics”, we also get to see what kinds of features each instance is using.

There’s a huge difference between the “typical” Octopus instance and some of our largest installations. Here’s a typical instance - the median Octopus server:

- 3 environments

- 4 machines (nearly all listening mode)

- 3 projects

- 78 deployments

- 23 package steps, 9 script steps

- 4 users

A larger server (the 75th percentile):

- 6 environments

- 12 machines (10 listening mode)

- 10 projects

- 382 deployments

- 140 package steps, 120 script steps

- 10 users
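Figures like these are per-instance percentiles over the feature-usage telemetry. A minimal sketch, using made-up machine counts (not real Octopus data) and Python’s standard `statistics` module; we don’t know which percentile method Octopus actually uses, so `method="inclusive"` here is an assumption:

```python
import statistics

# Hypothetical per-instance machine counts, including one very large server.
machines_per_instance = [1, 2, 3, 4, 4, 5, 8, 12, 15, 40, 3566]

median = statistics.median(machines_per_instance)
# n=4 splits the data into quartiles; the third cut point is the 75th percentile.
q1, q2, q3 = statistics.quantiles(machines_per_instance, n=4, method="inclusive")
mean = statistics.mean(machines_per_instance)

print(median, q3, round(mean, 1))
```

This is why medians and percentiles describe the “typical” server better than averages do: in this sample the median stays at 5 machines, while the single outlier pulls the mean above 300.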

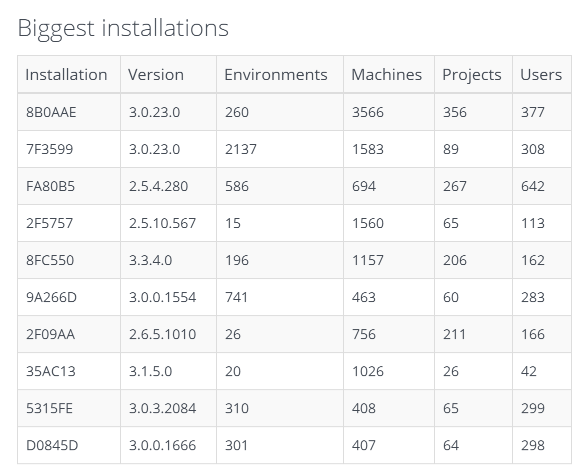

And here are some of our largest servers:

Yes, there’s an Octopus server somewhere in the world that deploys to 3,566 Tentacles!

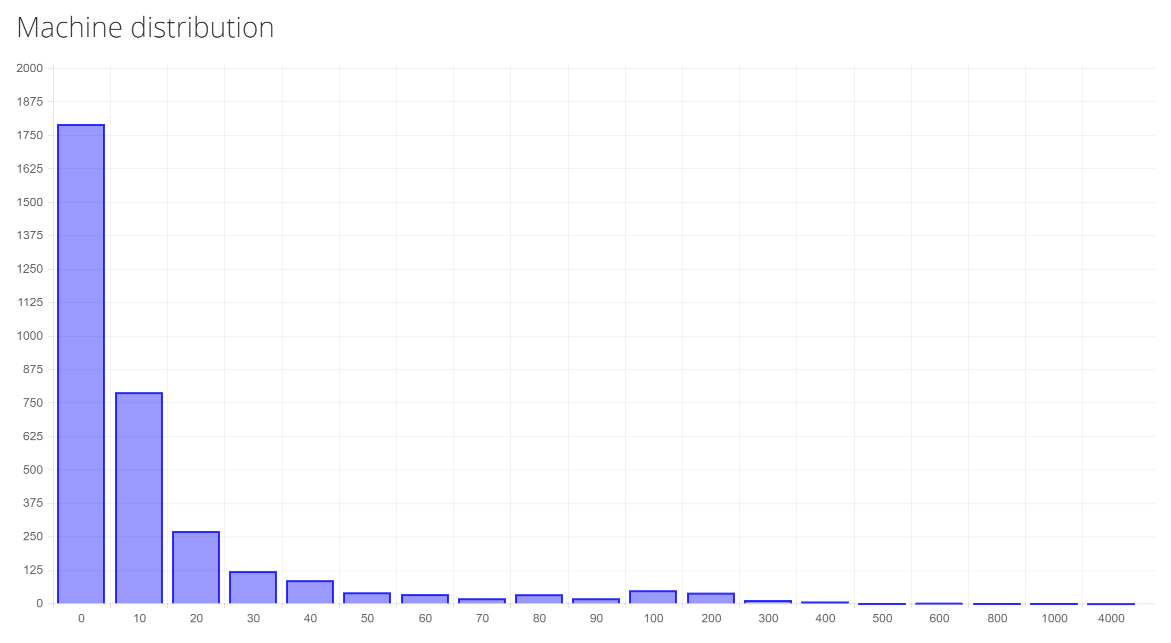

This has quite an impact on how we do feature design. We’ve always assumed most installs have a handful of environments and projects, and for the most part that’s true. But then there’s a very long tail of people with massive installations. Here’s what that tail looks like for machines (the X axis is the number of machines, the Y axis is the number of users - so most people have < 10 machines, some have 10-20, and then a decreasing number have more).
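A long-tail chart like this is a histogram over per-instance machine counts. A minimal sketch, assuming made-up counts and illustrative bucket edges (the actual bins behind the chart aren’t stated); `bucket` is a hypothetical helper:

```python
from collections import Counter


def bucket(machines: int) -> str:
    """Map a machine count to a coarse histogram bucket (edges are illustrative)."""
    if machines < 10:
        return "<10"
    if machines < 20:
        return "10-19"
    if machines < 100:
        return "20-99"
    return "100+"


# Hypothetical machine counts for ten instances.
machines_per_instance = [2, 4, 4, 7, 9, 12, 15, 30, 250, 3566]
histogram = Counter(bucket(m) for m in machines_per_instance)

for label in ["<10", "10-19", "20-99", "100+"]:
    print(label, histogram[label])
```

Widening the buckets as counts grow keeps the handful of very large servers visible without letting them dominate the chart.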

Summary

When you add up all of the Octopus instances that we have telemetry data on, in aggregate, Octopus has performed over 2.6 million deployments. A thousand people a month are trying Octopus, and most of them stick with it for some time - it’s a very “sticky” product. And there are some incredibly big Octopus servers doing serious deployment automation. I’m so proud that Octopus is doing so well.

The secret to our success has been the amazing community of people who have followed our journey over the last few years. I hope that you’ll enjoy this peek into how we’re tracking!

If there are other numbers you’re interested in, let me know in the comments below. I can’t promise to share everything (hey, we have to have some secrets!) but I’ll do my best :-)