In this post, I want to share some changes that I’m considering to the deployment workflow that Octopus Deploy uses.

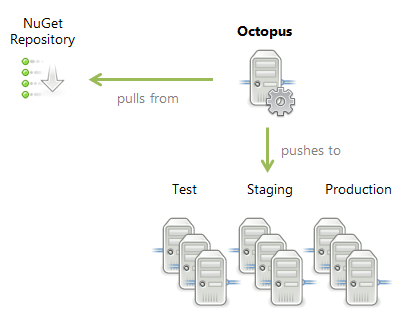

Currently in Octopus, any deployment consists of three stages:

- The Octopus Server downloads all necessary packages from the NuGet feed

- The Octopus Server securely uploads the packages to the required Tentacles

- The deployment steps run (packages are extracted and configured)

This can be summed up in the following diagram:

We do this for a simple reason: packages can be big, and it can take a long time to upload them. We don’t want to deploy one package (for example, a DB schema change), then wait 20 minutes for the next package to finish uploading before continuing. Instead, we upload everything, then we configure everything.
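To make the ordering concrete, here is a minimal Python sketch of the three stages as a plan of actions. It is only an illustration of the sequence described above, not Octopus code: the `Package` type, the `plan_deployment` function, and the action strings are all hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Package:
    name: str
    tentacles: list[str]  # hypothetical: machines that need this package


def plan_deployment(packages: list[Package]) -> list[str]:
    """Return the ordered actions for the current three-stage workflow."""
    actions = []
    # Stage 1: the server downloads every package from the NuGet feed.
    for pkg in packages:
        actions.append(f"download {pkg.name}")
    # Stage 2: the server uploads every package to each Tentacle that needs it.
    for pkg in packages:
        for tentacle in pkg.tentacles:
            actions.append(f"upload {pkg.name} -> {tentacle}")
    # Stage 3: only now do the deployment steps run, with no transfers in between.
    for pkg in packages:
        for tentacle in pkg.tentacles:
            actions.append(f"deploy {pkg.name} on {tentacle}")
    return actions


if __name__ == "__main__":
    plan = plan_deployment([
        Package("Database", ["db01"]),
        Package("Website", ["web01", "web02"]),
    ])
    for action in plan:
        print(action)
```

The point of the sketch is that every `upload` action appears before the first `deploy` action, so a slow transfer never sits between two deployment steps.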

But there are some downsides to this architecture, which I’ll discuss along with some solutions I’m considering.

Pre-upload steps

The first problem with this architecture is that it’s not currently possible to run any steps (including ad-hoc PowerShell scripts) before the packages are uploaded. This prevents using scripts to do useful tasks like establishing a VPN connection. It would be nice to insert steps that are run before the download/upload activity happens.

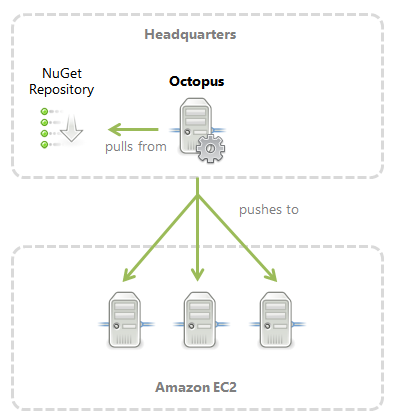

Bandwidth

Since Octopus pushes a copy of each package to the relevant Tentacles, there’s potentially a lot of wasted bandwidth. For example, imagine a scenario where the Octopus Server is on a local network, and it is deploying to many machines in the cloud. The same package will be pushed over the internet many times:

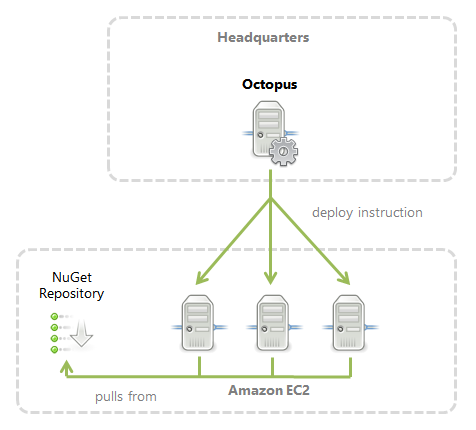

An alternative that would save bandwidth is to replicate the packages to a NuGet server in the cloud and to have each Tentacle pull the package from it:

This architecture could also work nicely when deploying to machines in different data centers, using DNS or a distributed file system to ensure that each machine picks the NuGet server closest to it.
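A rough back-of-the-envelope comparison shows the size of the saving. The sketch below assumes that, with a replicated NuGet server, exactly one copy of the package crosses the internet and the Tentacles then pull it over the cloud's internal network. The function name and the example figures (a 200 MB package and 10 Tentacles) are made up for illustration.

```python
def internet_transfer_mb(package_mb: float, tentacle_count: int,
                         replicate_to_cloud: bool) -> float:
    """Estimate how many megabytes cross the internet for one package."""
    if replicate_to_cloud:
        # Assumption: one copy is replicated to the cloud NuGet server,
        # and the Tentacles pull from it without crossing the internet again.
        return package_mb
    # Push model: the server sends a separate copy to each Tentacle.
    return package_mb * tentacle_count


if __name__ == "__main__":
    push = internet_transfer_mb(200, 10, replicate_to_cloud=False)
    pull = internet_transfer_mb(200, 10, replicate_to_cloud=True)
    print(f"push to each Tentacle: {push} MB over the internet")
    print(f"replicate once, pull locally: {pull} MB over the internet")
```

Under these assumptions the push model's internet traffic grows linearly with the number of Tentacles, while the replicated model stays at one package's worth no matter how many machines are deployed to.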

Improving the deployment process

To support these scenarios, I’m considering two changes to the deployment process.

The first would be the concept of pre-package upload steps, which would be run before NuGet packages are downloaded. You could add PowerShell steps here to establish a VPN connection or perform other tasks before the package download process begins.

The second change would be an option on the package step settings to have Tentacles download packages directly. Instead of Octopus downloading the packages, Octopus would instruct Tentacles to download the packages. The downloads would all happen before the deployment steps are run (again, so that we aren’t waiting to copy a package between deployment steps), but the Tentacles would fetch them directly from the NuGet server.
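As a sketch of how the two changes might fit together, the Python below plans a deployment with pre-upload steps and a per-step direct-download option. Nothing here reflects a real Octopus API: `PackageStep`, `direct_download`, `plan_proposed_deployment`, and the script name are hypothetical, and the design is still only under consideration.

```python
from dataclasses import dataclass


@dataclass
class PackageStep:
    package: str
    tentacles: list[str]
    direct_download: bool = False  # hypothetical name for the proposed option


def plan_proposed_deployment(pre_upload_steps: list[str],
                             steps: list[PackageStep]) -> list[str]:
    """Return the ordered actions for the proposed workflow."""
    actions = []
    # Change 1: scripts (for example, opening a VPN) run before any package moves.
    for script in pre_upload_steps:
        actions.append(f"run {script}")
    # Package acquisition still completes before any deployment step starts.
    for step in steps:
        if step.direct_download:
            # Change 2: each Tentacle fetches the package from the NuGet server itself.
            for tentacle in step.tentacles:
                actions.append(f"{tentacle} downloads {step.package} from NuGet server")
        else:
            # Existing behaviour: the server downloads, then uploads to each Tentacle.
            actions.append(f"server downloads {step.package}")
            for tentacle in step.tentacles:
                actions.append(f"server uploads {step.package} -> {tentacle}")
    # Deployment steps run last, with every package already in place.
    for step in steps:
        for tentacle in step.tentacles:
            actions.append(f"deploy {step.package} on {tentacle}")
    return actions


if __name__ == "__main__":
    plan = plan_proposed_deployment(
        ["connect-vpn.ps1"],
        [
            PackageStep("Website", ["web01", "web02"], direct_download=True),
            PackageStep("Database", ["db01"]),
        ],
    )
    for action in plan:
        print(action)
```

Making the option per package step, as described above, means a single deployment could mix both transfer styles: large packages bound for cloud machines could be pulled directly, while others are still pushed by the server.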

Hopefully these changes will make it easier to handle deployments in distributed environments. I’d love to get your feedback on the proposed changes in the comments box below.