A ‘deployment pipeline’ describes the practices and tooling used to deliver software. It’s an essential part of not only DevOps, but also Continuous Integration and Continuous Delivery.

The goal of the deployment pipeline is simple: to get software from your developers to your users as quickly, often, and smoothly as possible. The best way to achieve that is with automation.

Let’s look at the different stages, methods, and tools to help you build or improve your deployment pipeline.

This is part of a series of articles about Software Deployment.

Build pipelines versus deployment pipelines

The word ‘pipeline’ gets used in many different contexts in DevOps, so it’s understandable if there’s confusion about the different uses. This is especially true of ‘build pipelines’ and ‘deployment pipelines’, which overlap in more than just the word ‘pipeline’.

A build pipeline is what happens between a developer committing code and the creation of a deployable artifact. As part of Continuous Integration, a build pipeline will help you:

- Compile code

- Test

- Create (or trigger the creation of) a deployable package

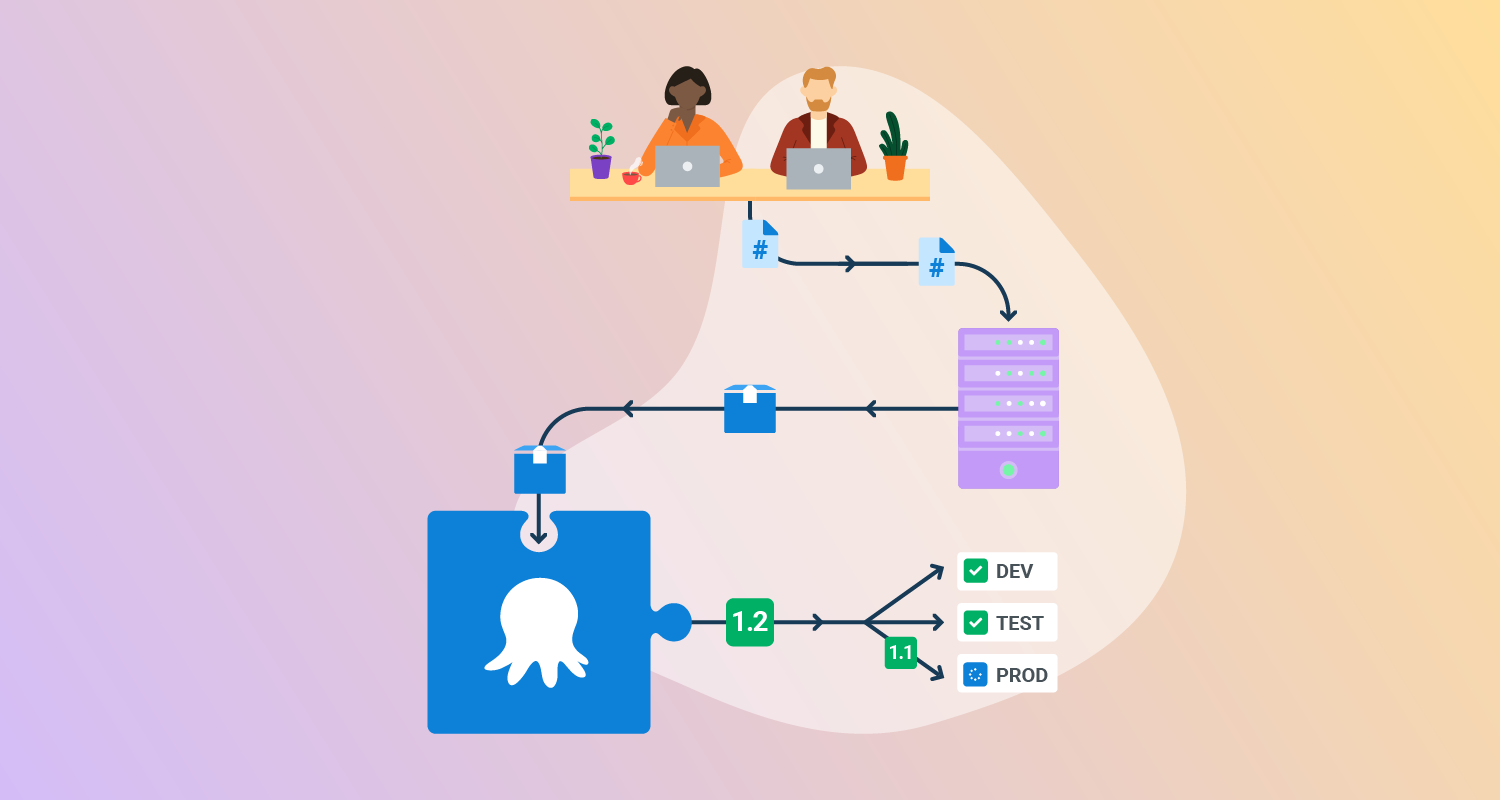

A deployment pipeline starts at the same place, with a developer committing code, but ends much later with a release in your users’ hands.

It includes not only the commit, testing, and building of your code, but also the packaging and deployment through your environments.

A deployment pipeline is the result of adopting Continuous Integration and Continuous Delivery.

The stages of a deployment pipeline

Using a minimal example, any deployment pipeline should have at least 4 key stages:

- Commit

- Build

- Acceptance

- Production

Let’s look at what happens in each stage.

Commit

When a developer finishes writing code, they commit the changes to the team’s main branch in the code repository.

If you follow DevOps and Continuous Integration practices, this should happen several times a day. Committing this often keeps each batch of changes small, and smaller batches lower the risk of changes breaking the software.

The commit should also trigger the processes in the build stage.

Build

When a developer commits code, your pipeline should allow you to quickly:

- Compile the code

- Test the code for errors

- Provide almost instant feedback to the developer if there are problems

- Package the code for deployment

You should use the same deployable artifact for every deployment of this release, no matter the target or environment.
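One way to picture the "same artifact everywhere" rule is to record a content digest at build time and check it before every deployment. The sketch below is illustrative only: `Artifact`, `build_artifact`, and `deploy` are hypothetical names rather than the API of any particular tool, and the package bytes are a stand-in for a real package file.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    """A packaged release, identified by version and content digest."""
    name: str
    version: str
    digest: str  # SHA-256 of the package bytes, recorded once at build time


def build_artifact(name: str, version: str, package_bytes: bytes) -> Artifact:
    """Build stage: package once and record the digest."""
    return Artifact(name, version, hashlib.sha256(package_bytes).hexdigest())


def deploy(artifact: Artifact, package_bytes: bytes, environment: str) -> str:
    """Deploy stage: refuse any package that differs from what was built."""
    if hashlib.sha256(package_bytes).hexdigest() != artifact.digest:
        raise ValueError(f"Package for {environment} does not match the built artifact")
    return f"{artifact.name} {artifact.version} deployed to {environment}"


package = b"compiled application bytes"  # stand-in for a real package file
release = build_artifact("web-app", "1.4.0", package)

# The same bytes go to every environment; nothing is rebuilt in between.
for env in ("Development", "Test", "Production"):
    print(deploy(release, package, env))
```

Because the digest is fixed at build time, a package that was rebuilt or modified between environments fails the check instead of quietly reaching production.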

Acceptance

The acceptance phase means deploying the change to your ‘Development’ or ‘Test’ environments.

Here, automated tests can check the code for problems that could impact your users in different scenarios. This can include performance or security testing.

Once confident your update is good, you can promote the release through your environment structure for manual testing.
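As a rough sketch of how automated checks can gate promotion, the example below runs a set of named checks and only reports the release as ready when none fail. The check names, thresholds, and measurements are all made up for illustration; real checks would call your test runner, load-testing tool, or security scanner.

```python
from typing import Callable, Dict, List


def failed_checks(checks: Dict[str, Callable[[], bool]]) -> List[str]:
    """Run every check and return the names of those that failed."""
    return [name for name, check in checks.items() if not check()]


# Made-up measurements standing in for real test, load, and scan results.
results = {"failed_tests": 0, "p95_response_ms": 180, "critical_vulnerabilities": 0}

acceptance_checks = {
    "functional tests": lambda: results["failed_tests"] == 0,
    "performance budget": lambda: results["p95_response_ms"] <= 250,
    "security scan": lambda: results["critical_vulnerabilities"] == 0,
}

failures = failed_checks(acceptance_checks)
if failures:
    print("Blocked by:", ", ".join(failures))
else:
    print("Ready to promote for manual testing")
```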

Production

The production phase comes when it’s time to deploy your changes to your live environment (handily also known as ‘production’), so your users can get their hands on the new features or fixes.

From there, you can monitor your production environment for:

- Unexpected problems or bugs

- Problems your users find

- Ways to improve areas of your pipeline through automation, or approval and process changes

Related content: Read our guide to blue/green deployments.

The components of deployment pipeline automation

Of course, to meet DevOps best practices, it’s vital to automate almost everything in your deployment pipeline.

Let’s look at some tools to help you achieve that.

Build server or CI platform

A build server is the biggest time-saver you can add to your deployment pipeline.

Whenever a developer commits code, a build server will automatically complete everything in the build phase, including:

- Compiling code

- Testing according to your software’s needs

- Passing or failing the code (and feeding back to the developer if failed)

- Merging code if it passes testing

- Packaging the code into a deployable artifact (or handing off to a packaging system, depending on your team’s tools)
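The steps above can be sketched as an ordered list that stops at the first failure, so a broken build never reaches the merge or packaging steps. This is a simplified model, not how any specific build server works internally: `run_build` is a hypothetical helper, and the lambdas are placeholders for real compiler and test-runner invocations.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[], bool]]


def run_build(steps: List[Step]) -> Tuple[bool, List[str]]:
    """Run steps in order and stop at the first failure.

    Returns (passed, log), where log is the feedback for the developer.
    """
    log: List[str] = []
    for name, action in steps:
        if action():
            log.append(f"PASS {name}")
        else:
            log.append(f"FAIL {name}")
            return False, log  # later steps (merge, package) never run
    return True, log


# Placeholder actions; a real build server would invoke compilers and test runners.
steps: List[Step] = [
    ("compile", lambda: True),
    ("test", lambda: False),  # simulate a failing test run
    ("merge", lambda: True),
    ("package", lambda: True),
]

passed, log = run_build(steps)
print("Build passed" if passed else "Build failed")
print("\n".join(log))
```

Stopping early keeps feedback fast: the developer sees the failing step straight away, and nothing is merged or packaged from code that didn't pass.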

Traditionally, build servers had to be self-hosted, like the open-source Jenkins. Jenkins remains a popular option due to its customization options and scalability.

With new technology come new choices, however. Increasing in popularity is what we call ‘Continuous Integration as a service.’ This means running your builds on cloud-based build platforms, which removes the need to host your own build infrastructure.

In fact, even most Git repository services now offer build features. GitHub, for example, offers GitHub Actions, which can run build tasks directly from your repo.

Your choice of build server or service should reflect the needs of your software delivery. If your organization restricts where data can be stored or insists on on-premises tools, a self-hosted build server may be your only option.

Packaging

Packaging tools can automatically turn your successful code into a deployable artifact.

The packaging tool you should use depends on the language you code in and the target you deploy to. If deploying to containers, for example, you want a tool that creates container images, usually in the Open Container Initiative (OCI) image format that Docker and other container tools support.

Given most build tools’ extensibility, many can package your code for you (or at least trigger a build with a dedicated packaging service). However, you also need to think about image storage.

Most packaging ecosystems offer registries, like Docker Hub for container images, to catalog and store your packages. This way, your team and deployment tools can find what they need easily.

Deployments

There are a few things to consider when choosing your deployment automation tool.

Firstly, what you need might depend on what you’re deploying to and the complexity involved. If deploying a simple app to a single container, most build server solutions can manage your deployment.

Deploying through a build server may not be suitable if you have a complex set of targets or use multiple environments.

Secondly, managing deployments rarely means deploying to one hosting target. Instead, you would deploy to many targets within your environment structure.

Even without DevOps, most developers know the practice of promoting releases through at least 3 environments (though many teams use many more). A minimal environment structure may look like this:

- Development (or ‘dev’): Where developers can quickly deploy and test their updates

- Test (or ‘QA’): For QA teams to test the software as users would experience it

- Production (or ‘prod’): The live environment where people use or access your software
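To make the idea of promotion concrete, here is a minimal sketch that only allows a release into an environment once it has succeeded in the one before it. The `can_deploy` function and the `history` record are hypothetical; dedicated deployment tools model this with their own concepts and settings.

```python
from typing import Dict, Set

# The minimal environment structure from the list above, in promotion order.
ENVIRONMENTS = ["Development", "Test", "Production"]


def can_deploy(release: str, target: str, history: Dict[str, Set[str]]) -> bool:
    """Allow a deployment only if the release succeeded in the previous environment."""
    position = ENVIRONMENTS.index(target)
    if position == 0:
        return True  # the first environment accepts any release
    previous = ENVIRONMENTS[position - 1]
    return release in history.get(previous, set())


# Hypothetical record of releases that deployed successfully to each environment.
history = {"Development": {"1.4.0", "1.5.0"}, "Test": {"1.4.0"}}

print(can_deploy("1.4.0", "Production", history))
print(can_deploy("1.5.0", "Production", history))
```

In this example, release 1.4.0 has passed through Test and can go to Production, while 1.5.0 has only reached Development and can't skip ahead.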

Each environment could have countless different deployment targets within it. For example, you could have:

- Servers in each region you market to, to help with worldwide performance

- Load balancing or high-availability setups

- A mixture of cloud and physical servers to account for the different ways people use or get your software

Build servers don’t see deployment targets in the big picture of your environments. That can make it hard to tell which release has deployed where. A dedicated deployment tool will solve that problem for you.
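To illustrate the kind of view a deployment tool gives you, the sketch below folds a list of deployment records into a "which release is on which target" summary per environment. The record layout, target names, and `current_releases` function are assumptions made for this example, not the data model of any real product.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# (environment, target, release) records in deployment order; all names are made up.
deployments: List[Tuple[str, str, str]] = [
    ("Test", "test-web-01", "1.5.0"),
    ("Production", "prod-eu-web-01", "1.4.0"),
    ("Production", "prod-us-web-01", "1.4.0"),
    ("Production", "prod-us-web-01", "1.5.0"),  # later deployment to the same target
]


def current_releases(records: List[Tuple[str, str, str]]) -> Dict[str, Dict[str, str]]:
    """Return the latest release on each target, grouped by environment."""
    state: Dict[str, Dict[str, str]] = defaultdict(dict)
    for environment, target, release in records:
        state[environment][target] = release  # later records overwrite earlier ones
    return dict(state)


for environment, targets in current_releases(deployments).items():
    for target, release in targets.items():
        print(f"{environment:<12}{target:<18}{release}")
```

Here the summary shows that the two production targets are running different releases, which is the kind of detail that's easy to miss when deployments are just steps in a build log.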

Related content: Read our guide to canary deployments.

Summary

We explored:

- The definition and goals of a deployment pipeline

- The different phases of a deployment pipeline and what they do

- Ways to help you automate your deployment pipeline and why they’re a good idea

Now you know what a deployment pipeline is, why not create one? Octopus recently developed the Octopus Workflow Builder, which can create a full deployment pipeline in minutes. It:

- Creates a GitHub repository with a sample microservice application.

- Sets up GitHub Actions Workflows to build the sample application and push the artifacts to an Octopus instance.

- Populates an Octopus instance with:

  - Environments

  - Lifecycles

  - Accounts

  - Feeds

  - Deployment Projects

- Deploys to cloud platforms like EKS, ECS, and Lambdas using Octopus.
