Vibe coding offered us a tantalizing dream. Have a conversation with AI, forget the code exists, and ship software. This can be a useful way to solve localized and one-off problems. For production systems that need to live, breathe, and evolve for years, it’s a different story.

A new idea called “harness engineering” points to what you need to create production-grade AI-generated code, and the metaphor hidden in the name tells you everything.

The horse and the harness

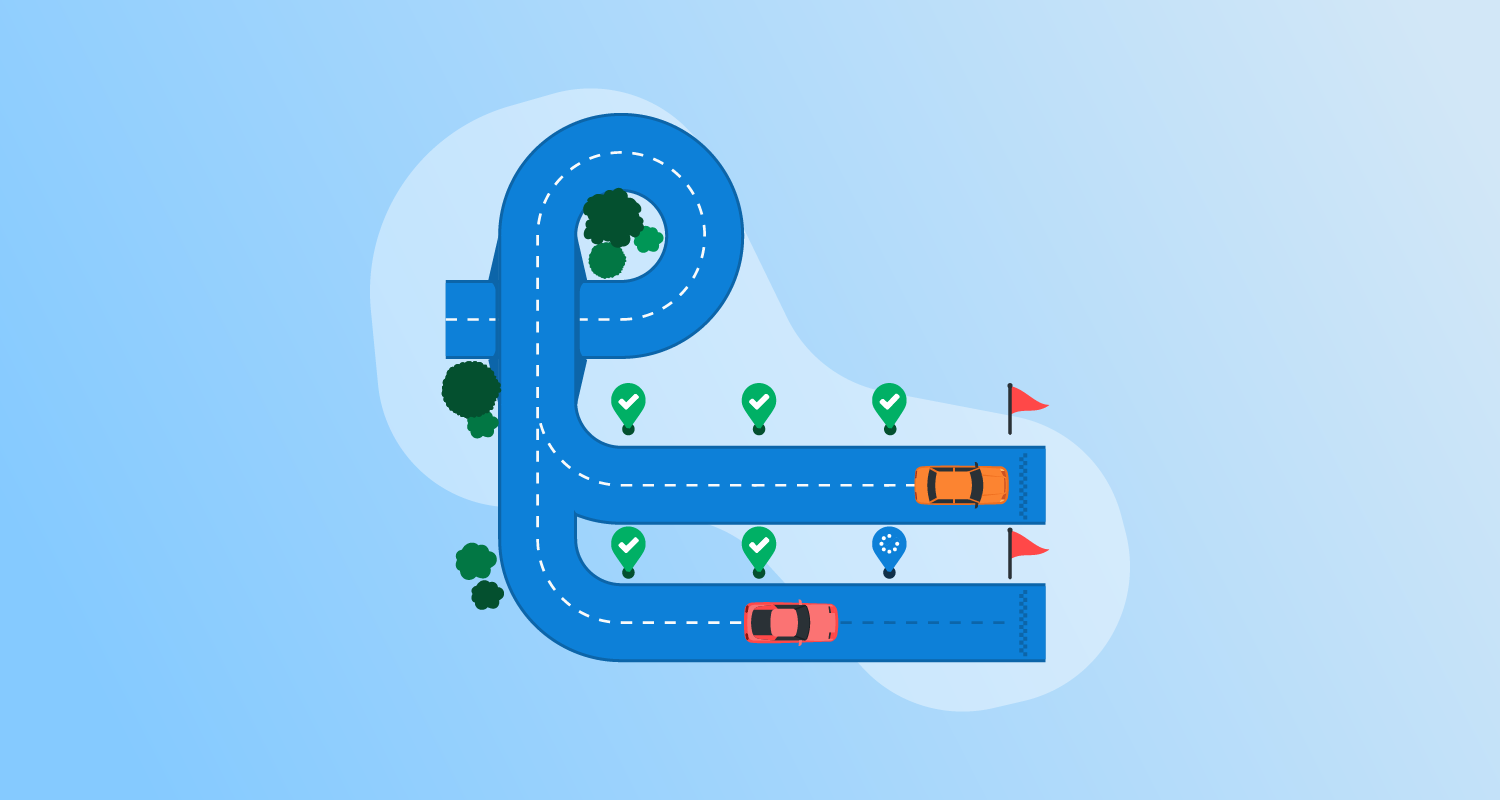

Horses are powerful animals, but left to their own devices, they direct their power toward whatever they want. A harness doesn’t diminish the horse’s strength; it channels it. The human holding the reins isn’t doing the physical work; they’re doing the intelligent work: choosing the destination, reading the terrain, knowing when to slow down.

Harness engineering works the same way. The AI agent is your horse. It’s generative, fast, tireless, and capable of producing large software systems with a small team of engineers directing the work. The harness is the system of tools, constraints, and feedback loops that channels all that raw capability toward something coherent, maintainable, and trustworthy.

When teams attempt to deliver large systems with AI agents without a harness, they typically get run over and trampled by the horse. You’ll know you’re missing the harness if you find yourself scheduling fixing sessions and tidy-up days as part of your process.

Why vibe coding doesn’t scale

When Andrej Karpathy coined “vibe coding” in early 2025, he was describing something delightfully honest: a mode where you “fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

When you vibe code, you accept all changes without reviewing them and pass any error messages back to the AI to fix. You let each success or failure steer you down a different route. If the AI won’t do what you want, you bend your ideas until you get something that works.

Karpathy said this works for “throwaway weekend projects,” and a year later, he’s moved on to calling the professional version “agentic engineering.”

The distinction matters. Vibe coding is epistemically passive: you surrender understanding along with the keyboard. Harness engineering is epistemically demanding; it just redirects what you need to understand. Instead of understanding every function, you need to understand the system that constrains how functions get written.

OpenAI created harness engineering as part of an experiment. A team spent 5 months building an internal product with no manually written code. The logic, tests, build configuration, documentation, and observability tooling were all created by AI agents. They created over a million lines of code with 3 engineers and deployed the system to internal users and external alpha testers. They estimate this took 10% of the time it would have taken a team to write the system without AI agents.

The insight from this experiment was that environment legibility is the crucial factor in getting AI agents to deliver software that works. Without this legibility, agents fail because they can’t find or reason about the information needed to complete the task.

The 3 practices that make harness engineering work

Based on OpenAI’s write-up and Birgitta Böckeler’s sharp analysis on the Thoughtworks blog, harness engineering clusters around three categories of practice.

Context engineering

When it comes to currently available AI agents, context is a precious resource. You don’t have an unlimited context window, so you need to be smart with how you use it. Providing a giant agent markdown file filled with instructions only crowds out the real task. Large agent markdown files are also hard to understand and update.

Instead, limit the agent markdown file to a hundred lines and use it as a table of contents. You can link out to individual documents with maps, execution plans, and design specifications. They should be well-organized within the codebase so they are versioned and discoverable.
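
One way to keep the agent file honest is to check it mechanically. The sketch below is a minimal illustration, not a description of OpenAI’s tooling: the file name `AGENTS.md`, the `docs/` paths, and the function `check_agent_file` are all hypothetical. It flags a file that exceeds the line budget and any relative link that points to a document that doesn’t exist.

```python
import re
import tempfile
from pathlib import Path

MAX_LINES = 100  # line budget from the guideline above
# Captures the target of a markdown link, ignoring any #fragment or title.
LINK_PATTERN = re.compile(r"\[[^\]]*\]\(([^)#\s]+)[^)]*\)")


def check_agent_file(agent_file: Path, max_lines: int = MAX_LINES) -> list[str]:
    """Return a list of problems found in the agent file (empty means OK)."""
    text = agent_file.read_text(encoding="utf-8")
    lines = text.splitlines()
    problems = []
    if len(lines) > max_lines:
        problems.append(
            f"{agent_file.name} has {len(lines)} lines (limit {max_lines}). "
            "Move detail into a linked document and keep only the link here."
        )
    for target in LINK_PATTERN.findall(text):
        if "://" in target:
            continue  # external URLs are not checked in this sketch
        if not (agent_file.parent / target).exists():
            problems.append(
                f"{agent_file.name} links to missing file '{target}'. "
                "Create the document or fix the path."
            )
    return problems


if __name__ == "__main__":
    # Build a throwaway repository layout (hypothetical names) and check it.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "docs").mkdir()
        (root / "docs" / "architecture.md").write_text("# Layers\n", encoding="utf-8")
        agent = root / "AGENTS.md"
        agent.write_text(
            "# Agent guide\n"
            "- [Architecture](docs/architecture.md)\n"
            "- [Execution plans](docs/plans.md)\n",
            encoding="utf-8",
        )
        for problem in check_agent_file(agent):
            print(problem)
```

A check like this could run in a pre-commit hook, so the table of contents can’t quietly grow back into a giant instruction file.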

You can provide dynamic context from observability data, logs, metrics, traces, and information from browser dev tools. That will let the agent detect and fix bugs it introduces.
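
Dynamic context competes for the same limited window, so it helps to trim it before handing it over. The following is a rough sketch under stated assumptions: the function `build_log_context`, the log line format, and the character budget are all made up for illustration. It keeps only the most recent warning and error lines that fit within the budget.

```python
def build_log_context(log_lines: list[str], max_chars: int = 2000) -> str:
    """Keep the most recent WARNING/ERROR lines that fit in the budget."""
    relevant = [
        line for line in log_lines if " ERROR " in line or " WARNING " in line
    ]
    selected = []
    used = 0
    for line in reversed(relevant):  # walk from newest to oldest
        cost = len(line) + 1  # +1 for the joining newline
        if used + cost > max_chars:
            break
        selected.append(line)
        used += cost
    # Restore chronological order for the agent.
    return "\n".join(reversed(selected))


if __name__ == "__main__":
    # Made-up sample log lines, not real output from any system.
    logs = [
        "2025-01-01T10:00:00 INFO started",
        "2025-01-01T10:00:01 ERROR db timeout",
        "2025-01-01T10:00:02 WARNING retrying",
        "2025-01-01T10:00:03 ERROR db timeout again",
    ]
    print(build_log_context(logs))
```

The same idea applies to metrics and traces: filter for what is relevant to the task, then cap the size.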

Architectural constraints

This is where harness engineering most clearly diverges from vibe coding’s “anything goes” philosophy. The OpenAI team enforced a rigid architectural model whereby the business domain was divided into a sequence of fixed layers:

Types → Config → Repo → Service → Runtime → UI

Dependencies can flow only in the direction of the arrows, and cross-cutting concerns like auth, telemetry, and feature flags enter through a single explicit interface called “Providers”. Nothing else is allowed. You should enforce your design with custom linters and structural tests, which you can implement using AI agents.

You can use the error messages in custom enforcement tools to provide remediation instructions so AI agents can fix violations themselves.
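
A structural test for the layer rule can be quite small. The sketch below is an illustration, not the OpenAI team’s linter. It assumes a hypothetical package layout of `<domain>.<layer>.<module>` with lowercase layer names and a `providers` package, and it reads the rule as: a layer may import from itself and from layers to its left, so UI can use Service but Repo cannot use UI. Each violation carries a remediation instruction the agent can act on.

```python
import ast
from typing import Optional

# Layer order from the article; the lowercase package names are an assumption.
LAYERS = ["types", "config", "repo", "service", "runtime", "ui"]
PROVIDERS = "providers"  # assumed package name for the Providers interface


def layer_of(module: str) -> Optional[str]:
    """Return the layer a dotted module path belongs to, or None."""
    for part in module.split("."):
        if part == PROVIDERS:
            return None  # Providers sit outside the layer order
        if part in LAYERS:
            return part
    return None


def check_imports(module: str, source: str) -> list[str]:
    """Return remediation messages for imports that break the layer order."""
    own_layer = layer_of(module)
    if own_layer is None:
        return []  # files outside the layered model are not checked here
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            targets = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            targets = [node.module]
        else:
            continue  # relative imports are ignored in this sketch
        for target in targets:
            target_layer = layer_of(target)
            if target_layer is None:
                continue
            if LAYERS.index(target_layer) > LAYERS.index(own_layer):
                violations.append(
                    f"{module}:{node.lineno}: layer '{own_layer}' must not "
                    f"import '{target}' (layer '{target_layer}'). "
                    f"Fix: move the shared code into '{own_layer}' or an "
                    f"earlier layer, or expose it through {PROVIDERS}."
                )
    return violations


if __name__ == "__main__":
    # Hypothetical module in the repo layer that reaches into the UI layer.
    source = (
        "from billing.ui.widgets import render\n"
        "from billing.types.invoice import Invoice\n"
        "from billing.providers import auth\n"
    )
    for message in check_imports("billing.repo.invoices", source):
        print(message)
```

Because the message names the file, the line, the rule, and a way out, the agent doesn’t need a human to interpret the failure.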

Entropy management as garbage collection

Agents are pattern replicators. If you give them a codebase full of inconsistencies, they’ll faithfully reproduce them at scale. You can solve this by running background agents that scan for deviations and automatically fix them. You need to keep the code well factored and consistent to make it legible for future agent runs.
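
The scanning half of that loop can start simple. The sketch below is hypothetical throughout: the `Deviation` record, the function `scan_function_names`, and the choice of snake_case naming as the convention are illustrative assumptions. It finds functions that break the convention and turns each one into a task description that a clean-up agent could pick up; the fix itself is left to that agent.

```python
import ast
import re
from dataclasses import dataclass

# Convention assumed for this sketch: snake_case, leading underscores allowed.
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9_]*$")


@dataclass
class Deviation:
    path: str
    line: int
    name: str
    task: str  # instruction a clean-up agent could act on


def scan_function_names(path: str, source: str) -> list[Deviation]:
    """Find function definitions whose names break the naming convention."""
    deviations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            if not SNAKE_CASE.match(node.name):
                deviations.append(
                    Deviation(
                        path=path,
                        line=node.lineno,
                        name=node.name,
                        task=(
                            f"Rename function '{node.name}' in {path} to "
                            "snake_case and update every caller."
                        ),
                    )
                )
    return deviations


if __name__ == "__main__":
    # Made-up source with one inconsistent name.
    sample = "def getUser():\n    pass\n\ndef get_order():\n    pass\n"
    for deviation in scan_function_names("orders.py", sample):
        print(f"{deviation.path}:{deviation.line}: {deviation.task}")
```

Run on a schedule, a scan like this stops a stray pattern from becoming the example the next agent copies.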

When an agent struggles with a task, you shouldn’t fix it by hand. Identify what capability is missing, and then make it legible and enforceable for the agent. After adjusting the harness, have the agent write the fix. This improves the harness and makes it more valuable over time.

The human’s job in harness engineering

With agents handling the coding, humans shift their focus to designing environments, specifying intent, and building feedback loops.

Environment design concerns the arrangement of code, documentation, and constraints. Intent is specified through precise prompts that connect high-level goals to coherent implementations. Feedback loops come from test automation, structural checks, and agent-led reviews, minimizing the need to step in and fix what agents are doing.

The code review burden is managed through mechanical checks built into the harness, with agents handling the initial review. A review is escalated only when human judgment is needed, for example, to approve a novel architectural design. This prevents the code review from becoming a bottleneck.
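
The escalation rule can be written down as a small routing function. This is a sketch under assumptions: the `Change` fields, the three outcomes, and the idea that a change passing both gates is approved without a human are illustrative, not taken from OpenAI’s write-up.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    REJECT = "send back to the authoring agent"
    AUTO_APPROVE = "approve without human review"
    ESCALATE = "escalate to a human reviewer"


@dataclass
class Change:
    checks_passed: bool  # linters, structural tests, automated tests
    agent_review_passed: bool  # initial review by a reviewing agent
    introduces_new_architecture: bool  # e.g. a novel architectural design


def route_review(change: Change) -> Decision:
    """Decide who handles a change next, cheapest gate first."""
    if not change.checks_passed or not change.agent_review_passed:
        return Decision.REJECT
    if change.introduces_new_architecture:
        return Decision.ESCALATE
    return Decision.AUTO_APPROVE


if __name__ == "__main__":
    routine = Change(True, True, False)
    novel = Change(True, True, True)
    print(route_review(routine).value)
    print(route_review(novel).value)
```

The ordering matters: mechanical checks and agent review filter out most problems before a human is asked to spend any attention.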

What this means for the future

In the Platform Engineering community, we have been discussing the role of platform engineers as organizational enablers of AI. Platform teams may create and maintain templates for harness engineering that provide a solid starting point for environment legibility and for providing appropriate constraints. They might even provide shared custom linters and structural testing tools.

Bringing harness engineering into an existing codebase may be more difficult than applying the technique to new systems. An existing codebase will have fewer clear standards, which weakens the harness. Its accumulated entropy will pose problems similar to those of introducing test-driven development or a code linter for the first time.

If harness engineering gains traction, it will encourage the industry to converge on boring, well-established technology choices. Languages and tech stacks with more high-quality samples in training data and years of community Q&A coverage will naturally produce better results than niche tech stacks.

Harness engineering and Continuous Delivery

For teams already practicing Continuous Delivery, harness engineering is a natural fit. The techniques slot into existing feedback loops and validation practices.

Harness engineering forces teams to adopt long-standing good practices like high-quality documentation, modular design, consistent naming, and capturing architectural decisions. Agents have no tacit knowledge; until knowledge is made explicit, it doesn’t exist for them.

You’ll need to supplement harness engineering with a robust automated test suite that proves the system works as intended, since the harness constrains how code is written; it doesn’t validate that user needs are satisfied.

Getting started

Building a harness is a continuous process, and it might not prove its value until you’ve completed months of iteration. You should look for existing foundations that will give you a head start, like pre-commit hooks, custom linters, structural testing frameworks, documentation, and test automation.

Each existing foundation can be woven into a harness, which you then iterate on as you identify gaps. By making fixes through the harness rather than directly in the code, you strengthen the harness and make agents more capable. At the same time, the human’s work shifts to the interesting work of choosing the direction and reading the terrain.
